Last Updated on April 18, 2026 by KnownSense

You have learned about the OWASP Top 10 risks for LLMs. You know what can go wrong. Now the question is: how do you make sure it doesn’t? The answer is LLMOps governance and compliance — in other words, the rules, roles, and processes that keep AI systems safe, accountable, and legally compliant. In this article, you will find everything you need to set up proper LLMOps governance, from internal policies to regulatory compliance to automated workflows.

What Is LLM Governance?

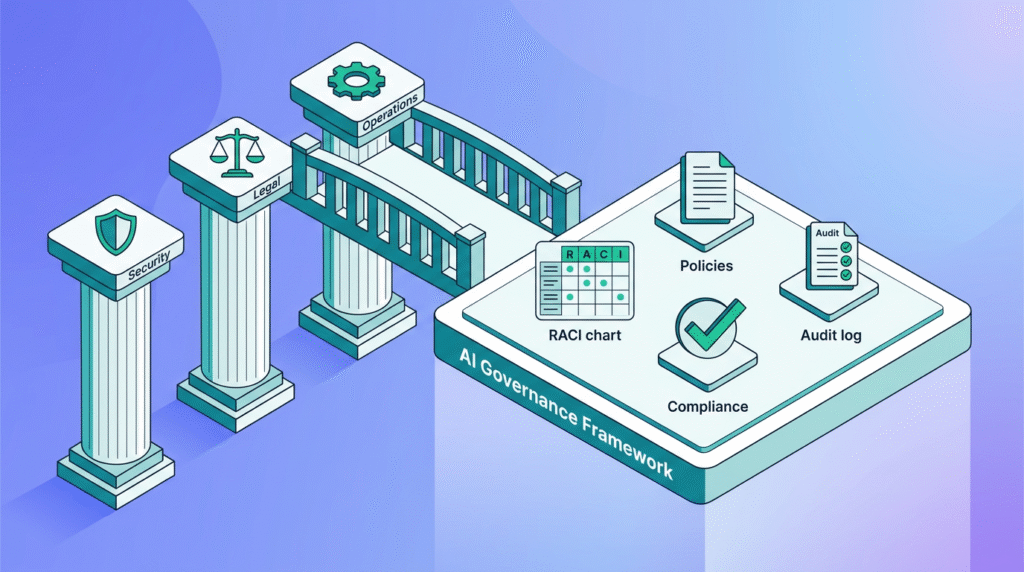

First, let’s define the term. LLM governance refers to the structures and policies that control how organizations develop, deploy, and manage AI models.

Here is a simple way to think about it: security tells you what to protect against, while governance tells you who is responsible, what the rules are, and how to enforce them.

Most importantly, without governance, even the best security controls will eventually fail because nobody owns them.

The Six Pillars of AI Governance

Now that we understand what governance means, let’s break it down into six essential pillars.

1. AI Responsibility Matrix (RACI Chart)

Every AI project needs clear ownership. A RACI chart answers four questions for every task:

| Role | Question |
|---|---|
| R (Responsible) | Who does the work? |
| A (Accountable) | Who makes the final decision? |
| C (Consulted) | Who provides input? |
| I (Informed) | Who needs to know? |

Why does this matter? When something goes wrong, you need to know immediately who to call and who has the authority to act. Without this clarity, teams waste critical time during incidents.
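To make the chart usable during an incident, it helps to keep it in a machine-readable form. The following is a minimal sketch, not a prescribed format: the task keys, team names, and the `escalation_contacts` helper are all hypothetical and only illustrate how the four RACI roles could be stored and queried.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RaciEntry:
    """One row of a RACI chart for a single task."""
    task: str
    responsible: str                  # R: who does the work
    accountable: str                  # A: who makes the final decision
    consulted: tuple[str, ...] = ()   # C: who provides input
    informed: tuple[str, ...] = ()    # I: who needs to know


# Hypothetical tasks and team names, for illustration only.
RACI = {
    "model_rollback": RaciEntry(
        task="Roll back a model release",
        responsible="MLOps on-call",
        accountable="Head of Engineering",
        consulted=("Security lead",),
        informed=("Product lead", "Legal"),
    ),
    "prompt_injection_incident": RaciEntry(
        task="Respond to a prompt injection incident",
        responsible="Security on-call",
        accountable="Security lead",
        consulted=("MLOps on-call",),
        informed=("Head of Engineering",),
    ),
}


def escalation_contacts(task_key: str) -> dict[str, str]:
    """Return who to call and who has the authority to act for a task."""
    entry = RACI[task_key]
    return {"call": entry.responsible, "decides": entry.accountable}
```

For example, `escalation_contacts("model_rollback")` returns the on-call team to contact and the person who can approve the rollback.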

2. AI Risk Documentation

Next, document every known risk and assign an owner to each one. This is not a one-time exercise: update the register regularly as your AI systems evolve. For each risk, record:

- Risk description — what could go wrong

- Likelihood — how probable is this risk

- Impact — what happens if it occurs

- Owner — who is responsible for mitigation

- Controls — what safeguards are in place

- Status — current state of each mitigation
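The fields above map naturally onto a small record type. This sketch assumes a 1 to 5 scale for likelihood and impact, a likelihood-times-impact score, and a 90-day review window. None of those come from a standard; they are illustrative choices, as are the names `RiskEntry` and `overdue_for_review`.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class RiskEntry:
    description: str      # what could go wrong
    likelihood: int       # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int           # assumed scale: 1 (minor) to 5 (severe)
    owner: str            # who is responsible for mitigation
    controls: list[str]   # safeguards in place
    status: str           # current state of the mitigation
    last_reviewed: date

    @property
    def score(self) -> int:
        """Simple likelihood x impact score used to rank risks."""
        return self.likelihood * self.impact


def overdue_for_review(entry: RiskEntry, today: date, max_age_days: int = 90) -> bool:
    """True if the entry has not been reviewed within the allowed window."""
    return (today - entry.last_reviewed).days > max_age_days


# Hypothetical example entry.
prompt_leak = RiskEntry(
    description="System prompt leaks through crafted user input",
    likelihood=4,
    impact=5,
    owner="Security lead",
    controls=["output filtering", "prompt hardening"],
    status="mitigation in progress",
    last_reviewed=date(2026, 1, 1),
)
```

Sorting the register by `score` gives a quick priority order, and `overdue_for_review` can drive a reminder so the register does not go stale.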

3. Data Management Policies

Data is the fuel for LLMs. If you do not control how your team classifies, stores, and uses data, your model will eventually mishandle something sensitive. Your data policies should cover:

- Data classification — label data by sensitivity level (public, internal, confidential, restricted)

- Usage limitations — define which data types can be sent to which models

- Retention rules — specify how long data is kept and when it must be deleted

- Access controls — enforce who can access what data and under what conditions
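Classification and usage limitations become enforceable once they are expressed as a check that runs before data is sent to a model. The sketch below assumes the four sensitivity levels listed above. The destination names and their ceilings are hypothetical placeholders for your own policy.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical destinations and the highest sensitivity each may receive.
# RESTRICTED data is not allowed to reach any model in this example policy.
MAX_SENSITIVITY = {
    "external_api_model": Sensitivity.PUBLIC,
    "vendor_hosted_model": Sensitivity.INTERNAL,
    "self_hosted_model": Sensitivity.CONFIDENTIAL,
}


def may_send(label: Sensitivity, destination: str) -> bool:
    """Return True if data with this label may be sent to the destination."""
    ceiling = MAX_SENSITIVITY.get(destination)
    if ceiling is None:
        return False  # unknown destinations are denied by default
    return label <= ceiling
```

Denying unknown destinations by default means a newly added tool has to be reviewed and registered before anyone can route data to it.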

4. AI Usage Policies

In addition to data policies, you need clear AI usage policies that cover:

- Acceptable use — what employees can and cannot do with AI tools

- Approved tools — which AI platforms and models your organization allows

- Prohibited actions — what employees must never enter into an AI system (for instance, customer SSNs, passwords, or trade secrets)

- Output handling — how your team should review and use AI-generated content

For instance, publish an acceptable use matrix that employees can reference quickly:

| AI Tool | Internal Use | Customer-facing | Code Generation | Data Analysis |
|---|---|---|---|---|
| Internal Copilot | Yes | No | Yes | Yes |
| External ChatGPT | Limited | No | Review Required | No |
| Custom RAG System | Yes | Yes | N/A | Yes |
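The same matrix can back an automated check, for example in an internal gateway or a pre-commit hook. This is a rough sketch under assumptions: the dictionary mirrors the table above, the key names and the `limited` and `needs_review` values are an illustrative encoding of the conditional cells, and `check_use` is a hypothetical helper.

```python
# Encodes the acceptable use matrix; values are "yes", "no", "limited",
# "needs_review", or "n/a". The encoding itself is an assumption.
ACCEPTABLE_USE = {
    "Internal Copilot": {
        "internal_use": "yes",
        "customer_facing": "no",
        "code_generation": "yes",
        "data_analysis": "yes",
    },
    "External ChatGPT": {
        "internal_use": "limited",
        "customer_facing": "no",
        "code_generation": "needs_review",
        "data_analysis": "no",
    },
    "Custom RAG System": {
        "internal_use": "yes",
        "customer_facing": "yes",
        "code_generation": "n/a",
        "data_analysis": "yes",
    },
}


def check_use(tool: str, use_case: str) -> str:
    """Look up the policy for a tool and use case; unknown combinations are denied."""
    return ACCEPTABLE_USE.get(tool, {}).get(use_case, "no")
```

Returning `"no"` for anything not listed keeps unapproved tools out by default, which matches the intent of an approved-tools list.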

5. Data Source Management

Similarly, you should track and document every data source your LLM touches. Ask yourself these questions:

- Where does the training data come from?

- Who owns the data?

- What licenses apply?

- How often does the team refresh the data?

- Who approved its use?

The answers give you a clear audit trail. When a regulator asks “where did this model get its information?”, you can answer immediately.
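One way to keep those answers on hand is a small inventory record per data source. The sketch below is an assumption about how such a record might look. The field names, the `missing_fields` check, and the example values are hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass(frozen=True)
class DataSource:
    name: str
    origin: str                 # where the data comes from
    owner: str                  # who owns the data
    license: str                # which license applies
    refresh_cadence_days: int   # how often the team refreshes it
    approved_by: str            # who approved its use
    approved_on: date


def missing_fields(source: DataSource) -> list[str]:
    """List fields left empty, so incomplete records can be rejected."""
    return [key for key, value in asdict(source).items() if value in ("", None)]


def to_audit_json(source: DataSource) -> str:
    """Serialize the record for an audit trail; dates become ISO strings."""
    return json.dumps(asdict(source), default=str, sort_keys=True)


# Hypothetical example record with the approver left blank.
faq = DataSource(
    name="support_faq",
    origin="internal knowledge base export",
    owner="Customer Support",
    license="proprietary",
    refresh_cadence_days=30,
    approved_by="",
    approved_on=date(2026, 1, 15),
)
```

Here `missing_fields(faq)` reports `approved_by`, which is the kind of gap worth catching before the source is used.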

6. Alignment with Company Values

Finally, AI policies should not exist in isolation. Instead, they must align with your organization’s broader values, ethics guidelines, and legal obligations. Therefore, ask these questions regularly:

- Does our AI use reflect our company’s stated values?

- Are we transparent with users about when they interact with AI?

- Do we have a process for addressing AI-related complaints?

- Are we testing for bias and fairness on a regular schedule?

Legal and Regulatory Compliance

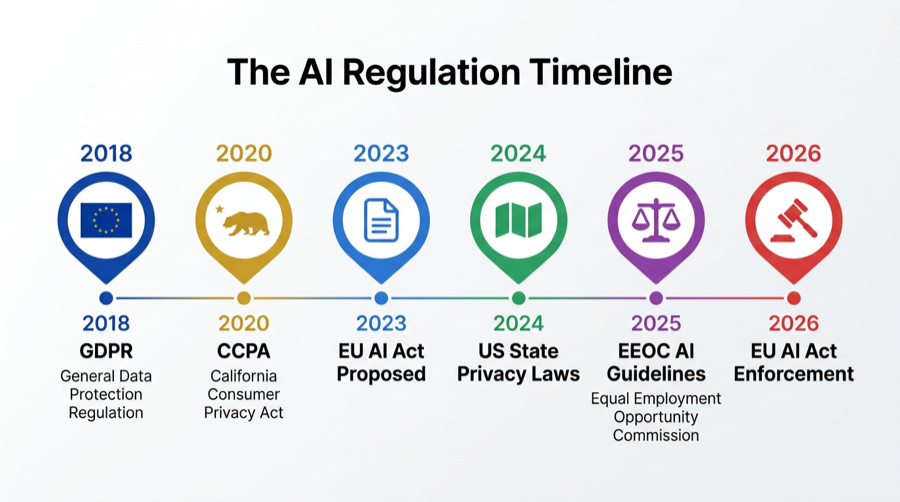

Now let’s shift to the legal side. Beyond internal governance, your organization must also navigate a growing web of regulations.

The Regulatory Landscape

AI regulation is evolving fast. Here are the key frameworks your organization should track:

| Regulation | Region | Key Focus |
|---|---|---|
| EU AI Act | European Union | Risk-based classification, transparency, prohibited AI uses |
| GDPR | European Union | Personal data protection, consent, rights around automated decision-making |
| CCPA/CPRA | California, USA | Consumer privacy rights, data deletion, opt-out |
| State Privacy Laws | Multiple US States | Varying consumer protection requirements |
| EEOC Guidelines | USA | Fair hiring practices when using AI |

Critical Legal Areas to Address

Product Warranties and Liability

To begin with, your organization should:

- Clearly state who takes responsibility when an AI system produces wrong or harmful output

- Update terms and conditions to address AI-generated content

- Define ownership of AI-generated outputs

Intellectual Property

Moreover, LLMs can create content that resembles copyrighted, trademarked, or patented material. Therefore, protect yourself by:

- Reviewing contracts with AI vendors for indemnification clauses

- Establishing clear IP ownership for AI-generated code and content

- Monitoring outputs for potential IP infringement

End-User License Agreements (EULAs)

Likewise, update your EULAs to cover:

- How your system processes and stores user prompts

- Rights and limitations on AI-generated outputs

- Protection against liability from AI-produced bias or plagiarism

Employment and Hiring

Additionally, if you use AI in hiring or employee management:

- Verify that the system does not discriminate based on protected characteristics

- Obtain proper consent for data collection (especially biometric data like facial recognition)

- Define clear data retention and deletion policies for candidate information
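For the discrimination check in the first bullet, one commonly used screening heuristic is the four-fifths rule from the US Uniform Guidelines on Employee Selection Procedures: a group whose selection rate is below 80% of the highest group's rate is flagged for closer review. The sketch below is only a first-pass screen under that assumption, not a legal determination. The function names and the sample numbers are hypothetical.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to selected / applicants, skipping groups with no applicants."""
    return {
        group: selected / applicants
        for group, (selected, applicants) in outcomes.items()
        if applicants > 0
    }


def adverse_impact_flags(
    outcomes: dict[str, tuple[int, int]], ratio_floor: float = 0.8
) -> list[str]:
    """Return groups whose selection rate is below ratio_floor of the highest rate."""
    rates = selection_rates(outcomes)
    if not rates:
        return []
    best = max(rates.values())
    if best == 0:
        return []  # nobody was selected, so there is no ratio to compare
    return sorted(group for group, rate in rates.items() if rate / best < ratio_floor)


# Hypothetical screening results: group -> (selected, applicants).
sample = {"group_a": (50, 100), "group_b": (30, 100)}
```

With the sample data, `group_b` is selected at 60% of the rate of `group_a`, so it is flagged. A flag is a prompt for human and legal review rather than proof of discrimination.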

Important: US federal agencies (EEOC, FTC, DOJ Civil Rights Division) are actively scrutinizing AI in hiring, so non-compliance can lead to significant penalties.

Timeline graphic: Key AI regulations from GDPR (2018) to EU AI Act (2026)

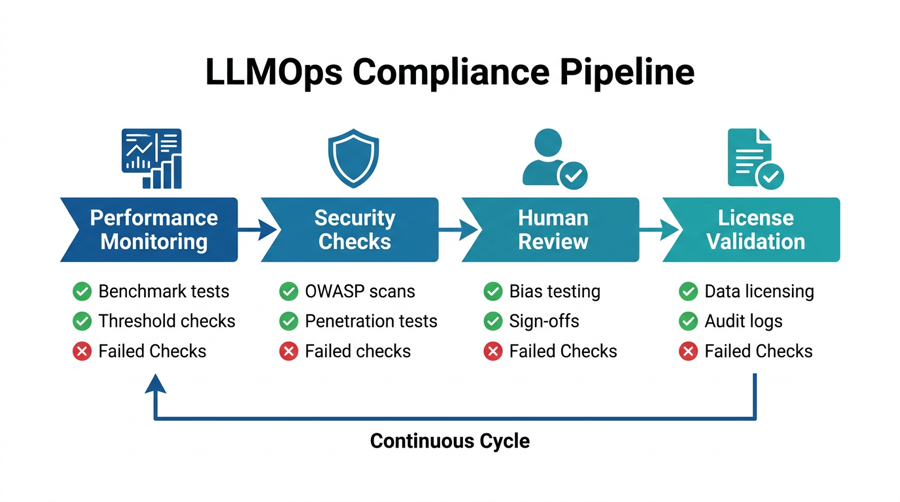

Operationalizing Compliance: From Policy to Practice

Policies on paper mean nothing without enforcement. This section shows how to automate compliance using modern DevOps workflows. Think of compliance as a pipeline, just like your CI/CD pipeline for code: every model update, data refresh, and deployment should pass through automated checks. Here are the four stages of automated compliance:

Stage 1: Performance Monitoring

First, set up automated benchmarks that run continuously:

- Accuracy, precision, recall, and F1 scores — measure these against a validation dataset

- Performance degradation alerts — trigger notifications when metrics drop below your thresholds

- Automatic rollback — revert to the previous model version if quality drops significantly
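The threshold and rollback bullets can be reduced to a small decision function. This is a sketch under assumptions: the threshold values are placeholders, the metric names are illustrative, and a missing metric is treated as a failure so that a broken benchmark run cannot pass silently.

```python
from typing import Mapping

# Hypothetical minimum acceptable values; tune these to your own model.
THRESHOLDS = {"accuracy": 0.90, "precision": 0.88, "recall": 0.88, "f1": 0.90}


def failing_metrics(
    metrics: Mapping[str, float], thresholds: Mapping[str, float] = THRESHOLDS
) -> list[str]:
    """Return the names of metrics below threshold (missing metrics count as failing)."""
    return sorted(
        name for name, floor in thresholds.items() if metrics.get(name, 0.0) < floor
    )


def decide(metrics: Mapping[str, float]) -> str:
    """Return 'deploy' if every metric passes, otherwise 'rollback'."""
    return "rollback" if failing_metrics(metrics) else "deploy"
```

The list returned by `failing_metrics` is also useful in the alert itself, since it tells the on-call engineer which metric regressed.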

For example, here is a typical workflow:

Model Update → Run Benchmark Tests → Check Thresholds

- Pass → Deploy

- Fail → Alert Team + Rollback

Stage 2: Security Checks

After performance checks pass, run security validations before deploying any model update:

- Scan for vulnerabilities against the OWASP Top 10 for LLMs

- Execute penetration tests on AI-connected endpoints

- Confirm that access controls and redaction layers work correctly
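The last check, confirming that redaction works, can be automated with canary values: feed known fake secrets through the redaction layer and fail the pipeline if any of them survive. In the sketch below, `redact` is a toy stand-in for your real redaction layer, and the patterns and canary values are illustrative assumptions that cover only two data types.

```python
import re
from typing import Callable

SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
EMAIL_PATTERN = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")

# Fake values used only for testing; never use real customer data here.
CANARIES = {"ssn": "123-45-6789", "email": "jane.doe@example.com"}


def redact(text: str) -> str:
    """Toy redaction layer: masks US SSN-shaped numbers and email addresses."""
    text = SSN_PATTERN.sub("[REDACTED_SSN]", text)
    return EMAIL_PATTERN.sub("[REDACTED_EMAIL]", text)


def leaked_canaries(
    redact_fn: Callable[[str], str], canaries: dict[str, str] = CANARIES
) -> list[str]:
    """Return the names of canary values that survive the given redaction function."""
    leaked = []
    for name, secret in canaries.items():
        output = redact_fn(f"Customer record: {secret}")
        if secret in output:
            leaked.append(name)
    return leaked
```

Because `leaked_canaries` takes the redaction function as an argument, the same check can run against the production layer in a pre-deployment job.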

Stage 3: Human Review Checkpoints

Nevertheless, not everything can or should be automated. Therefore, build human review into these critical stages:

- After model updates — verify that behavior changes are intentional

- After training on new data — check for bias and quality regressions

- Before production deployment — obtain sign-off from security and product leads

- Before data-driven releases — have the legal team review licensing and compliance
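Human checkpoints can still be enforced by the pipeline: the automation does not make the decision, it only refuses to proceed until the required people have signed off. A minimal sketch, assuming hypothetical stage and role names:

```python
# Hypothetical mapping of pipeline stages to the roles that must approve them.
REQUIRED_SIGNOFFS = {
    "production_deployment": {"security_lead", "product_lead"},
    "data_driven_release": {"legal"},
}


def missing_signoffs(stage: str, approvals: set[str]) -> set[str]:
    """Return the roles that still need to approve this stage."""
    return REQUIRED_SIGNOFFS[stage] - approvals


def can_proceed(stage: str, approvals: set[str]) -> bool:
    """True only when every required role has signed off."""
    return not missing_signoffs(stage, approvals)
```

For example, `can_proceed("production_deployment", {"security_lead"})` is false until the product lead also approves.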

Stage 4: Licensing and Data Validation

Lastly, automate these checks to close the loop:

- Training data licenses: verify that all datasets are used in line with their license terms

- Model licenses: confirm that your use of each model complies with its license and terms of service

- Feature store compliance: ensure your team handles sensitive data according to privacy regulations

- Audit logs: generate traceability records for compliance reviews
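The license and audit-log checks can be combined: validate each dataset's license against an allowlist, then write the result as a structured record. This is a sketch under assumptions. The allowlist, the dataset names, and the record fields are hypothetical, and a real allowlist should come from your legal team.

```python
import json
from datetime import datetime, timezone
from typing import Optional

# Hypothetical allowlist of license identifiers approved for training data.
ALLOWED_LICENSES = {"CC-BY-4.0", "MIT", "Apache-2.0"}


def license_violations(datasets: dict[str, str]) -> list[str]:
    """Return dataset names whose license is not on the allowlist."""
    return sorted(
        name for name, license_id in datasets.items()
        if license_id not in ALLOWED_LICENSES
    )


def audit_record(
    check: str, passed: bool, details: list[str], now: Optional[datetime] = None
) -> str:
    """Build a JSON audit entry with a UTC timestamp for traceability."""
    now = now or datetime.now(timezone.utc)
    return json.dumps(
        {
            "timestamp": now.isoformat(),
            "check": check,
            "passed": passed,
            "details": details,
        },
        sort_keys=True,
    )


# Hypothetical datasets: name -> license identifier.
datasets = {"faq_corpus": "CC-BY-4.0", "scraped_forum": "unknown"}
violations = license_violations(datasets)
entry = audit_record("dataset_licenses", not violations, violations)
```

Appending each `entry` to durable, append-only storage gives reviewers a record of what was checked, when, and with what result.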

Sample Automation: Airflow DAG

To illustrate, here is a practical example using Apache Airflow to automate compliance checks:

```python
# Targets Apache Airflow 2.4+; import paths and the `schedule` argument
# differ in other major versions.
from datetime import datetime, timedelta

from airflow import DAG
from airflow.operators.email import EmailOperator
from airflow.operators.python import PythonOperator

F1_THRESHOLD = 0.90


def calculate_performance_metrics():
    """Run model benchmarks and return scores."""
    # Placeholder values; replace with a real evaluation on your validation dataset.
    metrics = {
        "accuracy": 0.95,
        "precision": 0.92,
        "recall": 0.93,
        "F1": 0.92,
    }
    # The return value is pushed to XCom for downstream tasks.
    return metrics


def check_performance_metrics(**kwargs):
    """Fail the task if model performance drops below the threshold."""
    ti = kwargs['ti']
    metrics = ti.xcom_pull(task_ids='calculate_performance_metrics')
    if metrics["F1"] < F1_THRESHOLD:
        raise ValueError(
            "Model F1 score below threshold. Review required."
        )


dag = DAG(
    'llm_performance_and_compliance',
    default_args={
        'owner': 'airflow',
        'retries': 1,
        'retry_delay': timedelta(minutes=5),
    },
    description='Automated LLM performance and compliance checks',
    schedule=timedelta(days=1),
    start_date=datetime(2026, 1, 1),  # example date; use your own go-live date
    catchup=False,  # do not backfill runs for dates before today
)

calculate = PythonOperator(
    task_id='calculate_performance_metrics',
    python_callable=calculate_performance_metrics,
    dag=dag,
)

check = PythonOperator(
    task_id='check_performance_metrics',
    python_callable=check_performance_metrics,
    dag=dag,
)

# Requires SMTP settings in your Airflow configuration.
# Runs only if the check task succeeds.
notify = EmailOperator(
    task_id='send_compliance_notification',
    to=['compliance@example.com'],
    subject='LLM Compliance Check Complete',
    html_content="""
    <h3>Daily LLM Compliance Report</h3>
    <p>Performance and compliance checks completed successfully.
    Review the dashboard for detailed metrics.</p>
    """,
    dag=dag,
)

calculate >> check >> notify
```

This DAG runs daily and:

- Calculates model performance metrics

- Checks them against defined thresholds

- Sends a compliance report to the review team

In addition, you can extend this pattern to include security scans, license validation, and bias detection.

Putting It All Together: The Governance Framework

So, how do all the pieces connect? Here is a summary of the complete framework:

| Layer | What It Covers | Who Owns It |
|---|---|---|
| Policy | AI usage rules, acceptable use, data classification | Legal + Leadership |
| Roles | RACI chart, responsibility assignments | Product + Engineering |
| Security | OWASP controls, access management, redaction | Security + Engineering |
| Compliance | GDPR, EU AI Act, licensing, audit trails | Legal + Compliance |
| Operations | Automated pipelines, monitoring, alerting | MLOps + Engineering |
| Review | Human checkpoints, bias testing, sign-offs | Cross-functional |

Conclusion

Governance is not optional: without clear ownership and policies, even the best security controls eventually fail. Automate your compliance checks, because manual processes do not scale with growing AI adoption. Automation alone is not enough, though. Some decisions need human judgment, so build review checkpoints into your pipeline. Track regulation closely as well: the EU AI Act, GDPR, and state privacy laws continue to evolve, and falling behind can lead to costly penalties. Above all, document everything you do. When regulators come asking questions, your audit trail will be your strongest defense.