Last Updated on February 10, 2026 by KnownSense

For years, most of us used machine learning that classified, labeled, or predicted from patterns. Then something shifted. In 2022, ChatGPT showed millions of people that AI could create content—not just recognize it. That shift from pattern recognition to content generation is what defines this moment. Today you can get text, images, video, and code by describing what you want in plain language. As developers, we’re no longer typing every line ourselves. Tools like Copilot, ChatGPT, Claude, and Gemini can turn documentation or a short description into working code in a way that wasn’t realistic a few years ago. We’re working with systems that generate code, summaries, visuals, and strategic advice—and that advice is often useful. To use these tools well and stay competitive, you need more than surface-level prompting: you need a clear mental model of how generative AI works. In this article, we’ll walk through the core concepts behind generative AI so you can work with these systems with more control, confidence, and clarity.

The Core Engine: Transformers

The first of these core concepts is the architecture behind today’s models: the transformer. Transformers are neural networks built to understand and generate sequences—sentences, code, or even music. They let models process long input sequences and produce coherent, context-aware responses. Unlike older models that handle data step by step, transformers look at the whole input at once. That ability—global context—is what gives them their power.

Core concept

Self‑attention

At the heart of the transformer is self-attention. It is the mechanism that lets the model decide which parts of the input matter most for the token it’s generating. For each word it reads, the model asks: Which other words help me understand this one? It scores those words, weighs them, and blends the most important ones into the current prediction. Think of it as reading with a highlighter—except the model learns what to highlight.

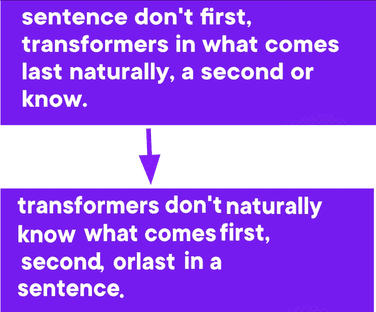

Positional encoding

Self‑attention isn’t enough; we also need positional encoding.

Positional encoding solved this by adding a special pattern to each token based on its position, so that “the cat sat” and “sat the cat” don’t get confused, even if the words are the same. Together, self‑attention and positional encoding help the model both understand relevance and retain order, and that is how transformers generate fluent and coherent language.

Why Self‑attention and Positional encoding matter?

Before transformers, sequence modeling relied mainly on recurrent neural networks (RNNs) and convolutional neural networks (CNNs). RNNs process tokens one at a time and pass a hidden state forward, which makes them slow to train and prone to forgetting earlier context (the vanishing-gradient problem). CNNs excel at local patterns and parallel computation over fixed windows, but their receptive field is limited, so they struggle with long-range dependencies. Transformers avoid both issues: they use self-attention to look at all positions in the sequence at once, so every token can directly depend on every other token. Positional encodings inject order into this otherwise permutation-invariant mechanism, so the model knows where each token is, not just what it is. Together, self-attention and positional encoding are why transformers handle long-range dependencies better than RNNs or CNNs, and why modern LLMs can take a long prompt (e.g., hundreds or thousands of words) and produce coherent, contextually grounded answers. Understanding these two ideas gives you the core of how today’s large language models “think.”

Modern Transformer Innovations

Transformers are powerful, but scaling them has historically been expensive. That cost has driven a wave of new techniques since they debuted in 2017. Today’s models from OpenAI, Claude, Gemini, and others are not only bigger but smarter, faster, and more efficient because of those innovations. To make sense of how we got here, it helps to focus on the core concepts that underpin modern architectures: efficient attention mechanisms, and how we train, optimize, and scale these models. We’ll walk through each of these in turn.

FlashAttention

It is the breakthrough that made larger models faster and more memory-efficient. Traditional self‑attention was powerful, but it was very slow. FlashAttention rewrote how that math gets done, moving key calculations into faster GPU memory, such as RAM, and minimizing data movement. It’s like going from longhand to lightning‑fast shorthand. Thanks to FlashAttention, we now have longer context windows—in some cases up to 1 million tokens—faster inference, and lower costs for deployment.

This innovation is now standard in models like GPT‑4, GPT‑5, Claude 3.5, and other newer models.

Grouped‑Query Attention

Another major improvement is GQA. In traditional multi‑head attention, each attention head computed its own keys and values, which was very expensive. GQA lets multiple heads share keys and values, reducing compute and memory usage without sacrificing quality. In simple terms, it’s like a classroom where several students share the same notes instead of each writing their own, saving time and effort while still learning the lesson. That is what powers OpenAI’s GPT‑4 and Gemini 5 models—models that are not just smart but very efficient at scale.

Mixture of Experts

Imagine a model that doesn’t use all of its neurons at once; it activates only the ones it needs. It’s similar to how some newer car engines work—you may have seen V8s that, when idling, use only four cylinders. That’s the same idea. The idea behind MoE is that each prompt activates a subset of the model’s parameters, enabling massive scale (trillions of parameters) without proportional compute. MoE models are like expert teams: only the relevant experts get called in. Some examples include Mistral’s Mixtral, Google’s Gemini 1.5, Meta’s Llama 3 MoE variants, and others.

Together, these innovations make transformers more than just large—they make them practical. Today you can run a multimodal model with a million-token context window, share memory across attention heads, and scale to expert‑level performance, all on infrastructure that’s cheaper and faster than what we had just one year ago. So when people say transformers have peaked, remind them: it’s not about size, it’s about smarter architecture. FlashAttention, Grouped‑Query Attention, and Mixture of Experts aren’t buzzwords; they’re what make modern generative AI possible in production today.

Conclusion

Generative AI has moved from pattern recognition to content generation, and transformers are the engine behind that shift. Self-attention and positional encoding give models the ability to weigh context and preserve order, which is why they outperform earlier RNNs and CNNs on long sequences. Modern innovations—FlashAttention, Grouped‑Query Attention, and Mixture of Experts—have made these models faster, more efficient, and practical at scale. Understanding these core concepts gives you a clear mental model for how today’s LLMs work and how to use tools like Copilot, ChatGPT, Claude, and Gemini with more control and confidence. What more we will read next are Gen AI across modalities (what developers need to know), LLMs for summarization and research, coding with Gen AI beyond autocomplete, image and video generation models, and multimodality—connecting text and vision.